Groovy script for dynamic response in ReadyAPI

Our requirement is to return a dynamic response when a user sends an incorrect API request, so that they get a different response. In ReadyAPI I have set up static responses for the REST, SOAP, and JDBC protocols, and these work when the user requests the response normally. However, when the user sends a request with an incorrect payload (for example, a wrong ID such as xxxxx), they still receive the same response from ReadyAPI. I would like to create a script that handles dynamic responses; could you please share an example?

Solved, 161 views, 0 likes, 3 comments

ReadyAPI cannot get token: No enum constant com.eviware.soapui.impl.rest.actions.oauth.OAuth2Paramet

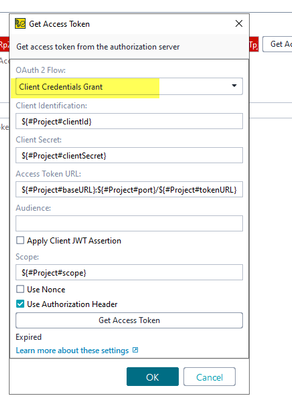

Hi there, I need help. My ReadyAPI used to refresh the token with no issue, but recently I cannot get any token. I got the error below from the log: "No enum constant com.eviware.soapui.impl.rest.actions.oauth.OAuth2Parameters.PKCEChallengeMethod.Not defined". My team and I are using the Client Credentials grant, and it had been working properly until recently. I don't recall any change to the OAuth2 config. Any idea how to fix this? We need to fix this urgently. Thanks

442 views, 0 likes, 3 comments

Data Source Data Generator step won't work when used via loadtestrunner.bat

I'm using a Data Source Data Generator step to populate a value in a subsequent step, but for some reason it's not taken into account when run via loadtestrunner.bat (and Performance TestRunner). When I run the same test in the UI, everything works correctly. The DataGen step also works correctly when used in script mode (but I see it's deprecated, and the suggestion is to use the Data Generator of the Data Source instead). I attach a demo project where the issue is clearly visible. I used Fiddler to sniff the API requests, as I haven't found a way to debug requests from TestRunner.

225 views, 0 likes, 0 comments

Understanding output of results

I am trying to understand the in-tool reporting of the test results that I am seeing. I executed a test with Max Target Runs set to 200,000. The test ran for 5:04 minutes, and the Test Step Metrics show the following: Data Source count: 194774; REST Request count: 194846; Test Case Level count: 200099. The REST request writes a record to a database table, and in the table I have 199995 records. I am not sure how to interpret this. They are all different counts and I don't know why. Can anyone help me understand this output better? I expected the test to execute 200,000 requests, so why am I seeing fewer at the step level while the test case level count is higher?

308 views, 0 likes, 2 comments

Performance testing simultaneous requests

This is probably a dumb question, but how can I go about performance testing thousands of simultaneous calls at once to observe how the system handles that? The performance/load/stress tests I've done with ReadyAPI in the past have been about the number of requests over time with VUs. In this instance, I don't want, say, an hour-long test run with the same request over and over; I want to test simultaneous calls. How can I go about this? I couldn't really find anything in the docs, unless I missed it.

744 views, 0 likes, 7 comments

Trouble accessing data through the Edge Server in my API testing

Hey everyone! I've been working on API testing recently and encountered an issue related to the "Edge Server." I'm hoping someone here can shed some light on this problem.

Background: I'm testing an application that relies heavily on an Edge Server for data retrieval. The Edge Server acts as an intermediary between the client and the actual server, improving response times by caching frequently accessed data and reducing the load on the primary server.

The problem: While executing my API tests, I noticed that the data retrieved through the Edge Server is inconsistent. In some cases, the data matches the expected results, but in others, it seems outdated or even incorrect. This inconsistency is making it difficult for me to validate the API responses accurately.

Troubleshooting steps taken so far:

- I have verified that my API requests are correct and include the necessary parameters.
- I have double-checked the test data on the actual server, and it is up-to-date.
- I have cleared my cache and restarted the application, but the problem persists.
- I have reached out to the development team responsible for maintaining the Edge Server, but I have yet to receive a response.

My questions:

- Is anyone else experiencing similar issues with an Edge Server during API testing?
- Are there any best practices or additional troubleshooting steps I should consider to ensure consistent data retrieval through the Edge Server?
- Is there a way to bypass the Edge Server (www.lenovo.com/de/de/c/servers-storage/servers/edge/) temporarily for testing purposes, so I can compare the results directly with the primary server?

Any insights or suggestions would be greatly appreciated. I'm eager to resolve this issue and ensure reliable API testing. Thanks in advance!

302 views, 0 likes, 0 comments
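
For the Edge Server question above, one way to narrow down stale data is to fetch the same resource once through the edge and once from the primary server, then diff the two payloads field by field. The sketch below covers only the comparison step, on JSON that has already been parsed. The function name `diff_payloads` and the sample records are hypothetical. `Cache-Control: no-cache` and `Pragma: no-cache` are standard HTTP request headers, but whether a particular edge server honors them is an assumption to verify.

```python
from typing import Any, List

# Standard HTTP request headers that ask caches to revalidate with the origin.
# Assumption: the edge server in question respects them; many can be
# configured to ignore client cache directives.
NO_CACHE_HEADERS = {"Cache-Control": "no-cache", "Pragma": "no-cache"}


def diff_payloads(edge: Any, origin: Any, path: str = "$") -> List[str]:
    """Return one message per JSON path where edge and origin payloads differ."""
    if isinstance(edge, dict) and isinstance(origin, dict):
        diffs: List[str] = []
        for key in sorted(set(edge) | set(origin)):
            child = f"{path}.{key}"
            if key not in edge:
                diffs.append(f"{child}: missing from edge")
            elif key not in origin:
                diffs.append(f"{child}: missing from origin")
            else:
                diffs.extend(diff_payloads(edge[key], origin[key], child))
        return diffs
    if isinstance(edge, list) and isinstance(origin, list):
        if len(edge) != len(origin):
            return [f"{path}: length {len(edge)} (edge) vs {len(origin)} (origin)"]
        diffs = []
        for index, (edge_item, origin_item) in enumerate(zip(edge, origin)):
            diffs.extend(diff_payloads(edge_item, origin_item, f"{path}[{index}]"))
        return diffs
    # Scalars, or mismatched container types: compare directly.
    if edge != origin:
        return [f"{path}: edge={edge!r} origin={origin!r}"]
    return []


if __name__ == "__main__":
    # Hypothetical payloads: the edge copy carries an outdated price.
    edge_body = {"id": 7, "price": 19.99, "tags": ["a", "b"]}
    origin_body = {"id": 7, "price": 24.99, "tags": ["a", "b"]}
    for line in diff_payloads(edge_body, origin_body):
        print(line)  # $.price: edge=19.99 origin=24.99
```

Logging the differing paths alongside the response's caching headers (for example `Age` or `Cache-Control`, if the edge sends them) can help show whether the mismatches line up with cached entries.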